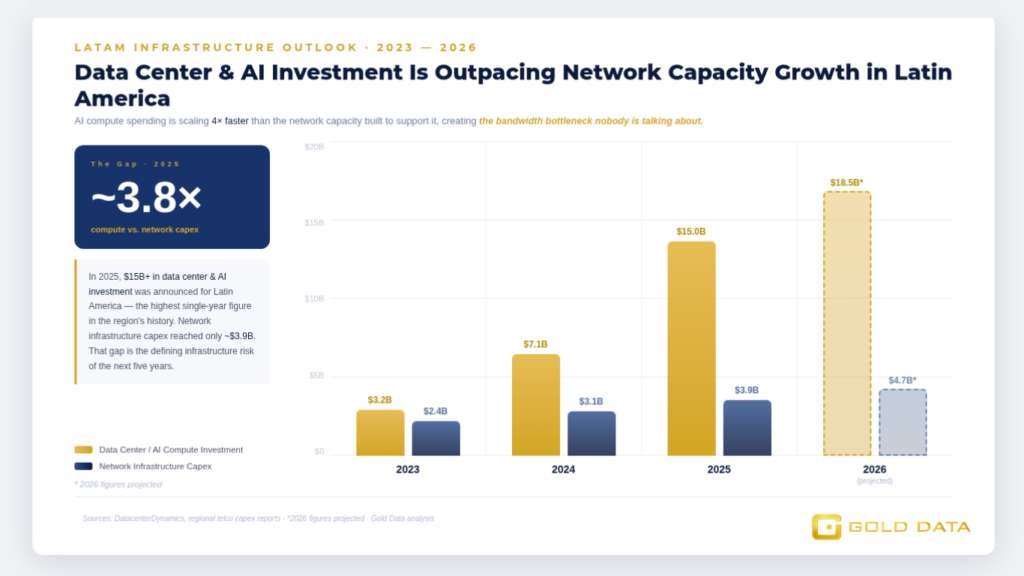

Everyone is talking about artificial intelligence in Latin America. Almost nobody is talking about the network infrastructure required to support it. That gap between AI ambition and connectivity reality is where enterprises are going to win or lose over the next five years.

Latin America’s AI Data Center Pipeline Surpassed $15B in Announced Investment in 2025

DatacenterDynamics tracked over $15 billion in announced data center investment targeting Latin America in 2025, with Mexico City, São Paulo, Bogotá, and Santiago emerging as the primary hyperscaler deployment hubs. Google, Microsoft, and Amazon have all made formal commitments to regional expansion, and several sovereign wealth funds and private equity firms have entered the market for the first time.

What the announcements do not capture is the network demand those data centers will generate. A hyperscale AI training facility does not just need power and cooling. It needs terabits per second of low-latency connectivity to its users, to other cloud regions, and to the submarine cables that link it to the global internet backbone.

That demand is landing on a regional network that was not designed to handle it. The gap between what AI infrastructure requires and what existing LATAM networks can deliver is the defining infrastructure challenge of the next five years.

The Stat That Matters

$15B+ in announced data center investment targeting Latin America in 2025 alone, the highest single-year figure in the region’s history.

Capital flowing into compute infrastructure creates an immediate, proportional demand for network capacity. The network side of that equation remains underbuilt relative to what AI workloads will require.

Our Perspective

From our position as a regional network operator, we see the AI-connectivity gap playing out in real terms across our customer base. Enterprises that have migrated workloads to LATAM-based cloud regions are discovering that the latency and throughput they expected, and that marketing materials promised, depend heavily on the quality of the last-mile and middle-mile network connecting them to the data center. In many cases, that network was not built for the traffic profiles AI applications generate.

AI workloads are fundamentally different from traditional enterprise applications in how they consume network resources:

- Training large models requires sustained, high-throughput data transfer between storage and compute nodes.

- Inference workloads, the part that actually talks to users, require ultra-low latency to deliver real-time responses.

Both demand network infrastructure that operates consistently at high utilization without degrading performance. That is a significantly higher bar than what most enterprise WAN architectures were designed to meet.
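To make the throughput side concrete, here is a rough back-of-the-envelope sketch of how long a training dataset takes to move over a given link. The function name, the 80% usable-capacity factor, and the example sizes are illustrative assumptions, not measurements from any real network.

```python
def transfer_time_hours(dataset_terabytes: float,
                        link_gbps: float,
                        efficiency: float = 0.8) -> float:
    """Estimate hours needed to move a dataset over a link.

    dataset_terabytes: dataset size in decimal terabytes (1 TB = 1e12 bytes).
    link_gbps: nominal link rate in gigabits per second.
    efficiency: assumed fraction of the nominal rate that is actually
        usable after protocol overhead and competing traffic.
    """
    if dataset_terabytes < 0:
        raise ValueError("dataset_terabytes must be non-negative")
    if link_gbps <= 0:
        raise ValueError("link_gbps must be positive")
    if not 0 < efficiency <= 1:
        raise ValueError("efficiency must be in (0, 1]")

    total_bits = dataset_terabytes * 1e12 * 8        # bytes -> bits
    usable_bits_per_second = link_gbps * 1e9 * efficiency
    return total_bits / usable_bits_per_second / 3600


if __name__ == "__main__":
    # Hypothetical example: a 100 TB dataset over two link sizes.
    for gbps in (10, 100):
        hours = transfer_time_hours(100, gbps)
        print(f"{gbps} Gbps link: about {hours:.1f} hours")
```

Under these assumptions, a tenfold increase in usable link capacity cuts the transfer window tenfold, which is why sustained throughput, not peak rate, is the figure to plan around.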

Gold Data has been building toward this moment. Our fiber routes across the Gulf of Mexico and Caribbean, combined with our IP transit capacity and data center interconnection capabilities, are positioned to deliver the kind of network performance that AI-intensive operations require. We are not retrofitting legacy infrastructure for a new use case. We built for this trajectory.

What This Means for Your Business

For enterprise teams deploying AI applications across Latin America, whether in finance, energy, retail, or manufacturing, the network layer is no longer a supporting character in your technology strategy. It is a primary determinant of whether your AI investments perform at the level your business case assumed.

Latency spikes during inference, throughput degradation during training data transfer, and routing instability during peak demand are all network problems that show up as AI problems in your board presentation.

The solution starts with an honest assessment of your current network architecture against the demands of the workloads you are actually running.
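One simple way to begin such an assessment is to compare tail latency, rather than the average, against the budget your inference workload can tolerate. The sketch below uses a nearest-rank percentile over round-trip-time samples. The sample values, the 50 ms budget, and the function names are hypothetical and chosen only for illustration.

```python
import math
from typing import Sequence


def percentile(samples: Sequence[float], pct: float) -> float:
    """Nearest-rank percentile of a non-empty sequence of samples."""
    if not samples:
        raise ValueError("samples must not be empty")
    if not 0 < pct <= 100:
        raise ValueError("pct must be in (0, 100]")
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)  # 1-based rank
    return ordered[rank - 1]


def assess_latency(samples_ms: Sequence[float],
                   budget_ms: float,
                   pct: float = 99.0) -> dict:
    """Compare an observed latency percentile against a budget."""
    observed = percentile(samples_ms, pct)
    return {
        "percentile": pct,
        "observed_ms": observed,
        "budget_ms": budget_ms,
        "within_budget": observed <= budget_ms,
    }


if __name__ == "__main__":
    # Hypothetical round-trip times in milliseconds: mostly fast,
    # with two spikes during peak demand.
    rtt_ms = [20.0] * 98 + [180.0, 200.0]
    print("median:", percentile(rtt_ms, 50))
    print(assess_latency(rtt_ms, budget_ms=50.0))
```

In this made-up data set the median looks healthy while the 99th percentile misses the budget, which is the pattern that tends to surface as an "AI problem" rather than a network problem.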

Gold Data’s team works with enterprises across LATAM to align network capacity with AI workload requirements. Start the conversation at golddata.net.

Key Terms Explained

AI Inference — The process of running a trained AI model to generate responses or predictions in real time. Inference workloads are latency-sensitive — even milliseconds of delay are perceptible to users, making low-latency network connectivity a hard requirement.

AI Training — The computationally intensive process of teaching an AI model on large datasets. Training workloads require sustained high-bandwidth connectivity between storage systems and GPU clusters, often generating terabits of internal data transfer per training run.

Hyperscaler — A company that operates cloud infrastructure at massive scale, primarily Google Cloud, Amazon Web Services, Microsoft Azure, and Meta. Their data center expansions in LATAM are the primary driver of regional AI infrastructure investment.

Last-Mile Connectivity — The final segment of the network path between a provider’s infrastructure and the enterprise customer’s premises. Last-mile performance is often the most variable and most overlooked factor in real-world application performance.

Content by: Claudia Tradardi · Head of Marketing & Media Relations · Gold Data · claudia.tradardi@golddata.net · golddata.net